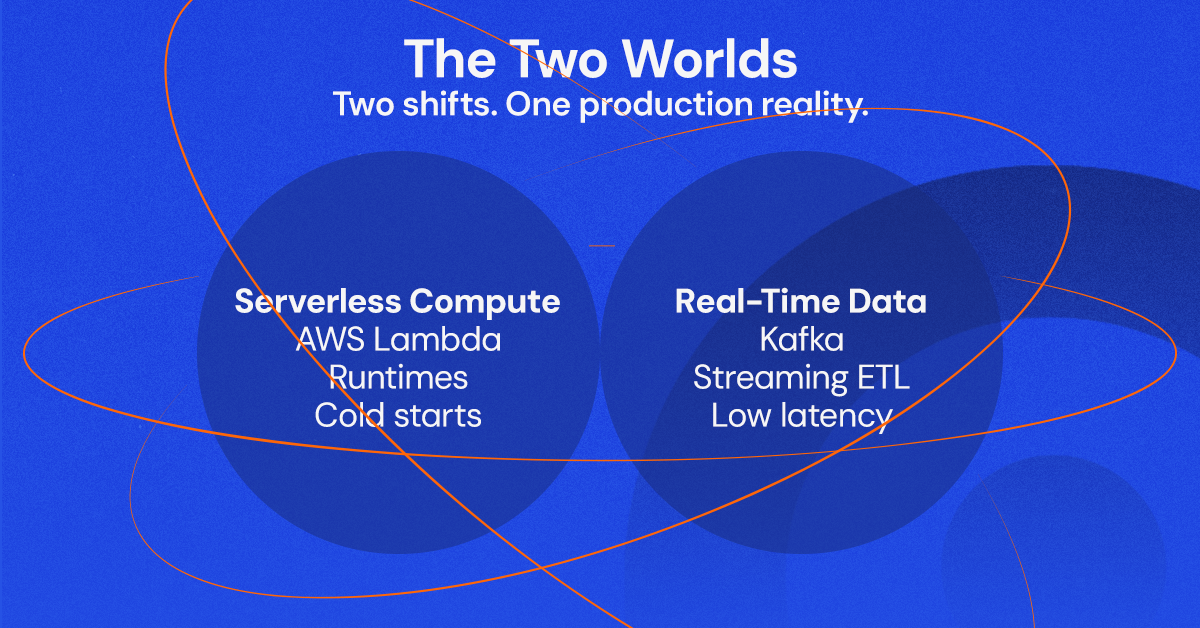

In the modern cloud, we are constantly balancing two massive shifts: the move to Serverless compute and the demand for Real-Time data. But statistics and “Hello World” tutorials are one thing; production is another.

Recently, two of our engineers, Stefan Ignatescu and Robert Haisuc, took the stage to break down the reality of building on AWS. One tackled the “Holy War” of Lambda Runtimes (Java vs. Python vs. GraalVM), while the other dove deep into the trenches of Streaming ETL with Kafka and AWS Glue.

Here is the honest breakdown of what works, what costs money, and the specific bugs that kept us up at night.

Part 1: The Lambda Runtime Showdown

To JVM or not to JVM? That is the expensive question.

AWS Lambda promises a lot: pay-per-use, automatic scaling, and zero server management. But when you are building enterprise apps, choosing the right language runtime isn’t just a preference, it’s a business decision regarding latency and cost.

We compared three contenders: Scripting (Python/Node), Standard JVM (Java/Kotlin), and GraalVM.

1. The "Cold Start" Reality

We ran benchmarks to see how fast these runtimes wake up.

- Scripting (Python/Node): The clear winner for startup speed. It’s lightweight and ideal for frequent, bursty tasks.

- The JVM: It’s reliable and mature, but it’s heavy. Initialization is slower, leading to the dreaded "Cold Start" latency.

- The GraalVM Compromise: By compiling Java to a native image, we saw significantly faster cold starts compared to the standard JVM.

2. Performance vs. Complexity

If your function runs for a long time (CPU-intensive tasks), the JVM shines. However, GraalVM introduces a “tax” on the developer: complex build processes, harder debugging, and library compatibility issues.

Our Verdict:

- Use Scripting for cost-sensitive, event-driven functions where every millisecond of startup counts.

- Use JVM/GraalVM for complex, enterprise-grade workflows where strong type safety and sustained performance outweigh the setup complexity.

Part 2: Streaming Data (Without Drowning)

Moving from “We’ll see the data tomorrow” to “We see it now.”

While Lambda handles the logic, we need to move the data. We are seeing a massive shift from traditional Batch ETL (extract-transform-load) to Streaming ETL.

In our recent architecture for a client, we moved from processing files periodically to ingesting events in real-time using Apache Kafka and AWS Glue.

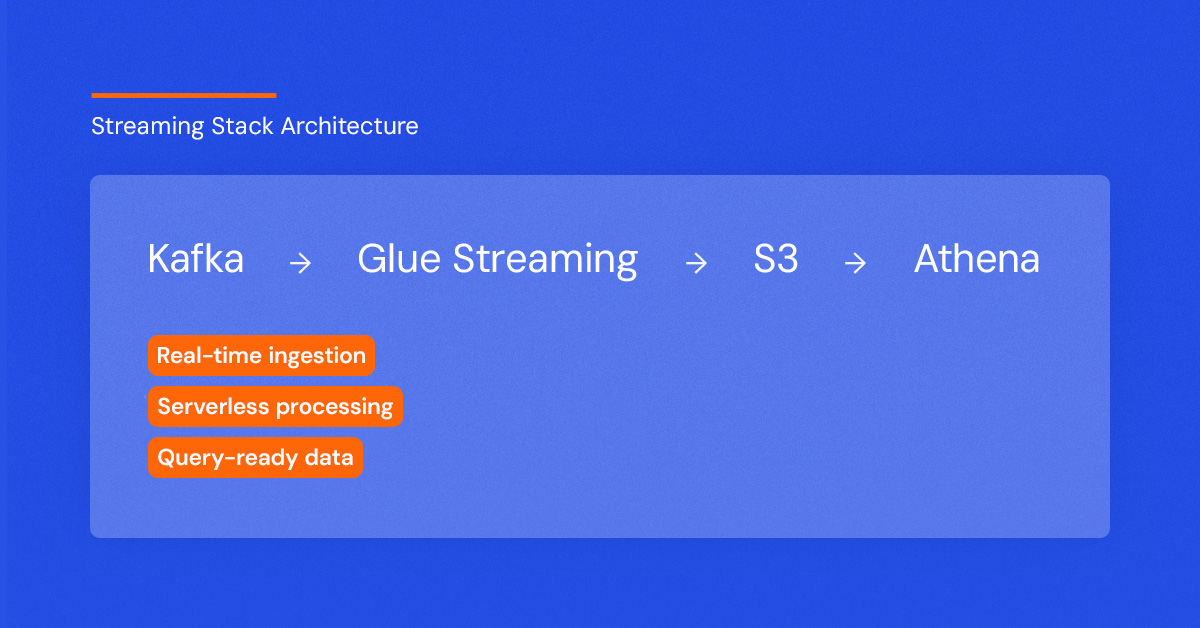

The Stack

- Source: Apache Kafka (for high-throughput event buffering).

- Processing: AWS Glue Streaming (Serverless Apache Spark).

- Storage: Amazon S3 (Partitioned by time).

- Consumption: Amazon Athena (SQL over S3).

Why Kafka + Glue?

We chose Kafka because it decouples producers from consumers and handles massive scale. We paired it with AWS Glue because it’s fully managed—we don’t want to manage Spark clusters manually.

The "War Story": The Networking Handshake from Hell

It wasn’t all smooth sailing. During development, we hit a wall connecting AWS Glue to our Kafka broker.

Kafka clients perform a “handshake” where they ask the controller for the leader of a partition. Our broker was returning localhost as its address. Since AWS Glue has no idea what “localhost” is in the context of our broker container, the connection failed silently.

The Fix: We had to explicitly configure advertised.listeners on the Kafka broker to return its reachable private IP, not localhost. Pro tip: Always check your networking configurations in distributed systems!

Part 3: The Architecture in Action

We implemented a Medallion Architecture to keep our data sane:

1.Bronze Layer: Raw data ingested from Kafka into S3 via Glue Streaming.

2.Silver Layer: Cleaned, filtered, and aggregated data.

3.Gold Layer: Business-ready data for reporting.

By using Glue Crawlers, we automatically discovered the schema of our streaming data, making it instantly queryable in Athena using standard SQL.

Conclusion: Fail Fast, Scale Later

Whether you are choosing a Lambda runtime or building a streaming pipeline, the lesson is the same: Context is King.

- Don't use Java on Lambda just because you know Java—use it because you need the performance, and be ready to optimize with GraalVM.

- Don't build a streaming pipeline if a daily batch job suffices—but if you do, ensure your networking game is strong.

Ready to modernize your data and compute stack? We don’t just read the documentation; we build, break, and fix these systems in production.

In this article:

Java Software Developer

Levi9 Romania

Java Software Developer

Levi9 Romania